It has been a while, and there are now a lot of new features in UrBackup Server 1.3.

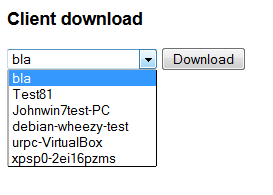

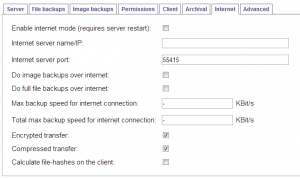

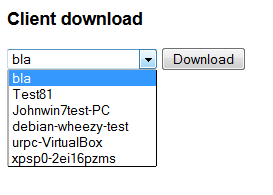

Users of the web interface can now download a client-specific installer directly from the server. The installer has the UrBackup Server information embedded, so the client automatically connects to the server once it is installed. I’ve also published a script that connects to a UrBackup server, creates a client named after the local computer, and then downloads and executes the client installer. This enables a one-click setup experience for Internet clients.

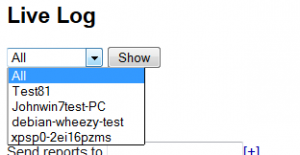

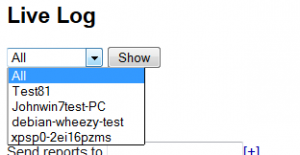

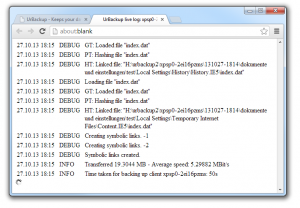

The new live log lets you see what the UrBackup server is currently busy with. You can either see all debug-level log messages or client-specific log messages.

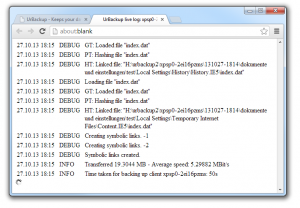

You can see which files the UrBackup Server is currently working on, along with the usual log messages, which you can also view afterwards via the web interface or on the client.

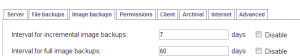

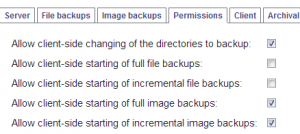

A “hidden” feature is now accessible via the web interface: you can disable any type of backup for any client.

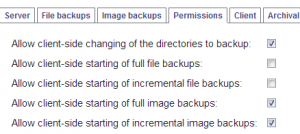

More fine-grained permissions for the client allow you to prevent users from starting full file backups while still allowing incremental file backups.

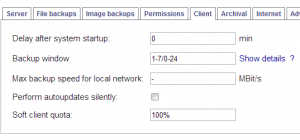

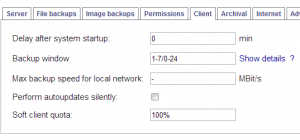

The soft client quota allows you to limit the amount of storage each client can use. During the nightly cleanup, UrBackup deletes the client’s backups until the storage usage is within the bounds prescribed by the soft client quota. Besides a percentage value, you can also use an absolute size such as “20G” as the soft client quota.
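To make the quota behaviour more concrete, here is a minimal Python sketch of the idea. It is not UrBackup's actual code: the function names are hypothetical, and it assumes that a percentage refers to the total backup storage size, that “G” means GiB, and that the oldest backups are deleted first.

```python
import re

# Assumption: binary units (1G = 1024**3 bytes).
_UNITS = {"K": 1024, "M": 1024**2, "G": 1024**3, "T": 1024**4}


def parse_quota(quota: str, total_bytes: int) -> int:
    """Turn a quota such as '20G' or '10%' into a byte count.

    Assumption: a percentage is relative to total_bytes,
    the size of the whole backup storage.
    """
    quota = quota.strip().upper()
    if quota.endswith("%"):
        return int(total_bytes * float(quota[:-1]) / 100)
    match = re.fullmatch(r"(\d+(?:\.\d+)?)([KMGT]?)B?", quota)
    if match is None:
        raise ValueError(f"unrecognized quota: {quota!r}")
    number, unit = match.groups()
    return int(float(number) * _UNITS.get(unit, 1))


def backups_to_delete(backups, quota_bytes):
    """Pick backups to delete until usage fits into the quota.

    backups: list of (name, size_in_bytes), ordered oldest first.
    Assumption: the oldest backups are removed first.
    """
    used = sum(size for _, size in backups)
    doomed = []
    for name, size in backups:
        if used <= quota_bytes:
            break
        doomed.append(name)
        used -= size
    return doomed
```

For example, `backups_to_delete([("mon", 5), ("tue", 5), ("wed", 5)], 10)` selects only `"mon"`, because deleting it already brings the usage down to the quota.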

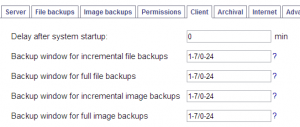

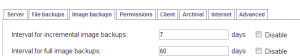

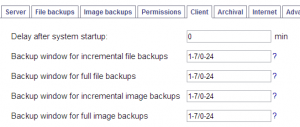

You can now have separate backup windows for incremental file, full file, incremental image, and full image backups.

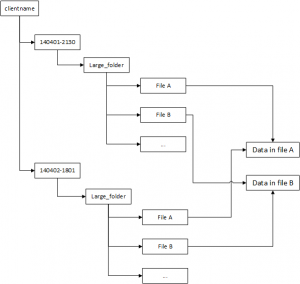

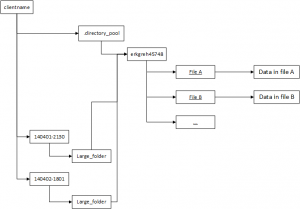

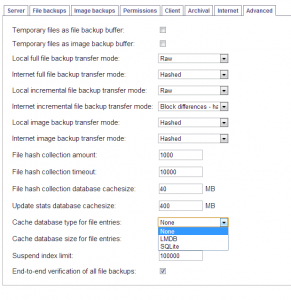

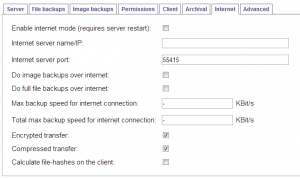

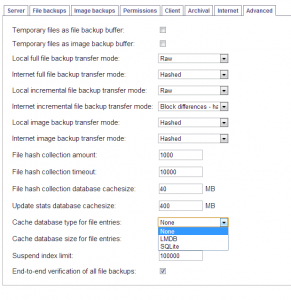

Client-side file hashes prevent the re-transfer of files that are already on the server, e.g., because another client has the same file. In some situations this drastically reduces the bandwidth requirements and speeds up file backups over the Internet.
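The principle can be sketched in a few lines of Python. This is only an illustration, not the UrBackup protocol: the `Server` class and `backup_file` function are hypothetical, the server is simulated in memory, and SHA-256 is picked here purely as an example hash.

```python
import hashlib


class Server:
    """Hypothetical in-memory stand-in for the backup server."""

    def __init__(self):
        self.store = {}          # file hash -> file content
        self.bytes_received = 0  # payload bytes actually transferred

    def has(self, digest: str) -> bool:
        return digest in self.store

    def upload(self, digest: str, data: bytes) -> None:
        self.store[digest] = data
        self.bytes_received += len(data)


def backup_file(server: Server, data: bytes) -> bool:
    """Back up one file; return True if its content had to be sent."""
    # The client hashes the file locally (SHA-256 is an assumption).
    digest = hashlib.sha256(data).hexdigest()
    if server.has(digest):
        # The server already has this content, possibly from another
        # client, so only the hash crossed the network.
        return False
    server.upload(digest, data)
    return True
```

If two clients back up the same file, the second call to `backup_file` returns `False` and `bytes_received` does not grow, which is where the bandwidth saving comes from.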

If you have performance problems with file backups, the new file entry cache may help. If the file entry cache is enabled, file entries (a mapping from file hash to file paths) are cached in a separate database, which may speed up backups. The caches are automatically created and destroyed when this setting is changed (and the server restarted), but creation may take a long time. The LMDB cache makes heavy use of memory-mapped files and is therefore only advisable on a 64-bit operating system. It also creates a very large sparse file on Windows. When in doubt, use the SQLite cache.
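To give a rough idea of what a mapping from file hash to file paths looks like in a separate SQLite database, here is a small Python sketch. The table and function names are made up for illustration and do not reflect UrBackup's real schema.

```python
import sqlite3


def open_cache(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a hypothetical file entry cache database."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS file_entries ("
        "hash TEXT NOT NULL, path TEXT NOT NULL)"
    )
    # The index on the hash column is what makes lookups fast.
    conn.execute(
        "CREATE INDEX IF NOT EXISTS idx_file_entries_hash "
        "ON file_entries (hash)"
    )
    return conn


def add_entry(conn: sqlite3.Connection, digest: str, path: str) -> None:
    """Record that a file with this hash is stored at this path."""
    conn.execute(
        "INSERT INTO file_entries (hash, path) VALUES (?, ?)",
        (digest, path),
    )


def lookup(conn: sqlite3.Connection, digest: str) -> list:
    """Return all known paths for a hash, in insertion order."""
    rows = conn.execute(
        "SELECT path FROM file_entries WHERE hash = ? ORDER BY rowid",
        (digest,),
    )
    return [row[0] for row in rows]
```

One hash can map to several paths, since the same file may exist in the backups of several clients.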